EXECUTIVE SUMMARY

Today’s Government organizations have access to more sources of data than ever before. This abundance allows managers to rely on data-driven decision-making processes to solve complex operational and programmatic problems that have a direct impact on their missions. Unfortunately, having large volumes of data from multiple sources often doesn’t translate into meaningful knowledge that helps people make better decisions. The reason for this disparity is that not all data is gathered and communicated with the same level of quality. Quality can be affected by format, timing, business process, location, or any other dimension that creates credibility and authenticity gaps between the people who collect information and the decision makers who must act upon today’s ‘truth.’

It gets worse. According to Larry English, an internationally recognized expert and founder of Information Impact International, poor data quality is a significant liability to organizations, costing them 15% to 25% of their operating budgets [3]. These figures reflect the grim reality that agency leaders and CXOs must manage every day.

To improve decision making, organizations must ensure that data can be collected, both strategically and tactically, with the highest level of accuracy, authoritativeness and authenticity. In addition, users should be confident that the data collected for specific decision processes, data calls or requests for information is not only accurate but also relevant and up-to-date.

Today’s agency decision makers need a better solution: one that allows secure collaboration wherever and whenever information is collected, on any device. Leaders can make operational decisions with more confidence when they know the information they have is correct, comes from a trusted source, and is up-to-date. That confidence is born of consistency in data quality.

THE QUEST FOR CORRECTNESS

Reliability in the data used to make decisions is the very essence of data quality: assurance that the available information is not only accurate, but also complete and up-to-date. Throughout routine operations, and unbeknownst to both contributors and users, data passes through a variety of processes that can undermine its integrity.
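
The completeness and currency dimensions named above lend themselves to automated checks. The following is a minimal Python sketch, not InQuisient’s implementation, that tests a single record for both; the field names in `REQUIRED_FIELDS` and the 24-hour `MAX_AGE` threshold are hypothetical. Accuracy is deliberately left out, since it usually requires comparison against a trusted source or human review.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schema and freshness threshold, chosen only for illustration.
REQUIRED_FIELDS = ("site_id", "reported_by", "status", "updated_at")
MAX_AGE = timedelta(hours=24)


def quality_issues(record, now=None):
    """Return a list of problems; an empty list means the record passed
    both the completeness check and the currency check."""
    now = now or datetime.now(timezone.utc)
    issues = []
    # Completeness: every required field must be present and non-empty.
    missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    if missing:
        issues.append(f"missing fields: {', '.join(missing)}")
    # Currency: the record must have been updated within MAX_AGE.
    updated = record.get("updated_at")
    if updated is not None and now - updated > MAX_AGE:
        issues.append(f"stale: last updated {now - updated} ago")
    return issues
```

Returning a list of reasons, rather than a single pass/fail flag, lets a reviewer see why a record was flagged.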

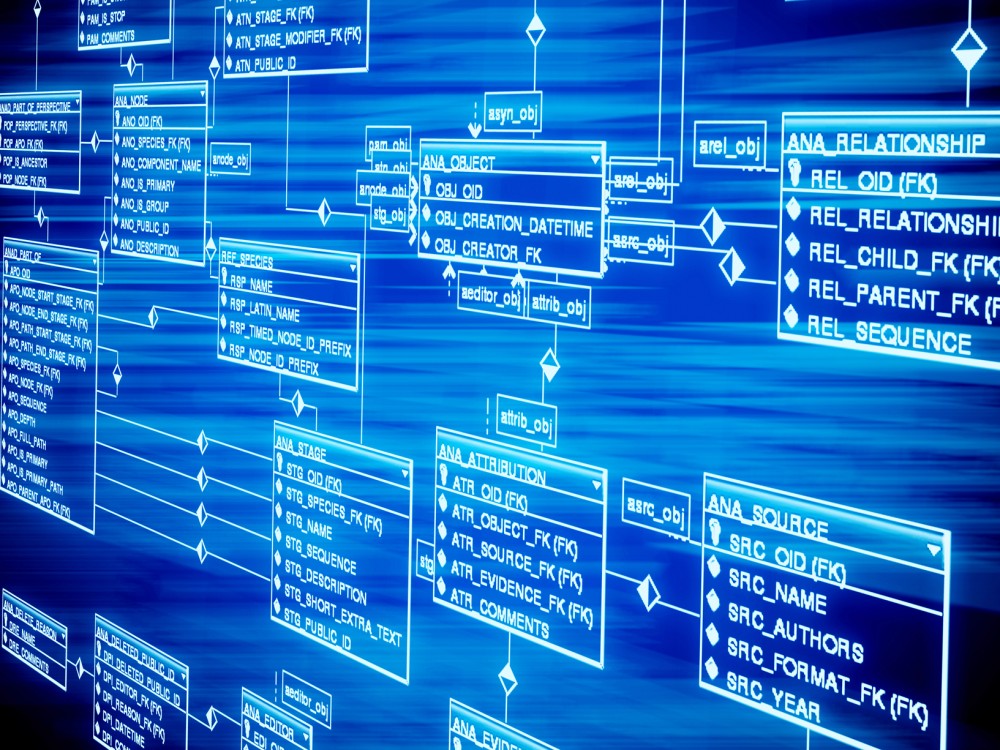

Manual data entry by front-line responders, initial data conversions, and system consolidations all create opportunities for data to be compromised, and inaccurate information is often accepted from that point on as the truth and carried through to subsequent communications. Likewise, crucial information can be misrepresented or omitted entirely during data extraction, transformation, or loading, where high volumes of data traffic dramatically increase the potential for these problems [1]. Just as data is vulnerable during input, it is also vulnerable during internal processing, especially during system upgrades and other internal IT procedures.
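
One common safeguard against silent omissions and alterations during loading, migration, or consolidation is reconciliation: comparing the records before and after a step. The sketch below illustrates that general technique and does not describe any particular product; the `id` key and the row layout are assumptions. It suits steps that are supposed to preserve content. For a transformation that legitimately changes values, the expected mapping would have to be applied to the source rows before comparing.

```python
import hashlib
import json


def fingerprint(row):
    """Stable hash of a row's contents (keys sorted so field order doesn't matter)."""
    payload = json.dumps(row, sort_keys=True, default=str).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def reconcile(source_rows, target_rows, key="id"):
    """Compare rows before and after an ETL step, matched on `key`.
    Returns the keys that were dropped, unexpectedly added, or altered."""
    src = {r[key]: fingerprint(r) for r in source_rows}
    tgt = {r[key]: fingerprint(r) for r in target_rows}
    return {
        "missing": sorted(src.keys() - tgt.keys()),
        "unexpected": sorted(tgt.keys() - src.keys()),
        "altered": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }
```

For example, `reconcile([{"id": 1, "qty": 5}, {"id": 2, "qty": 7}], [{"id": 1, "qty": 5}])` reports record 2 as missing and nothing as altered.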

A successful platform ensures the integrity of such data through a combination of capabilities, including document and metadata management, that help organizations better understand their data and the software that manipulates it. Without the proper resources, discrepancies can go unaccounted for in these situations, leaving agencies susceptible to costly mistakes based on incorrect representations of information.

Government agencies cannot afford these shortcomings, and an effective solution helps avoid them. With such a solution, critical information is consolidated and organized efficiently rather than lost or miscalculated across numerous spreadsheets and other work products. A superior solution speeds the contribution of authentic, high-quality data by consolidating the raw data collected on the front line and organizing it so that it is available to decision makers when and how they need it.

VALIDATED AUTHORITATIVENESS

In an organization where front-line responders are constantly generating new raw data, those responders are the first point of entry into a vast database. The organization has all of that data at its command, and although none of it has yet been validated, that information eventually culminates in actionable knowledge. The integrity of critical information is often compromised by an ambiguous chain of custody among operators, which is common in many of today’s agencies. An effective platform solves this problem by ensuring a clear chain of custody for all information entered into the system. The authorship and ownership of each piece of data are clearly documented and authenticated, simplifying audits and compliance.

An effective platform provides several capabilities that ensure critical information moves through the organization along a trusted chain of custody. By maintaining a full audit trail, the platform lets future decision makers see what facts have been captured in the database and what has been done with that data along the way, and follow a roadmap back to the source that shows the full story and ensures overall data integrity.
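
An audit trail of this kind can be approximated with an append-only event log attached to each record. The Python sketch below is a hypothetical illustration; the class and method names are invented for this example and are not taken from the InQuisient platform. Every change is recorded with its actor and a UTC timestamp, and the first event identifies the original source.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    actor: str
    action: str
    detail: str
    at: datetime


@dataclass
class AuditedRecord:
    record_id: str
    author: str
    value: dict
    trail: list = field(default_factory=list)

    def __post_init__(self):
        # Copy the caller's dict so later changes to it don't silently alter the record.
        self.value = dict(self.value)
        self._log(self.author, "create", repr(self.value))

    def _log(self, actor, action, detail):
        # Events are only ever appended, never edited or removed.
        self.trail.append(AuditEvent(actor, action, detail, datetime.now(timezone.utc)))

    def update(self, actor, **changes):
        before = dict(self.value)
        self.value.update(changes)
        self._log(actor, "update", f"{before!r} -> {self.value!r}")

    def source(self):
        """The original contributor: the actor on the first event."""
        return self.trail[0].actor

    def history(self):
        """(actor, action, detail) for every event, oldest first."""
        return [(e.actor, e.action, e.detail) for e in self.trail]
```

For example, after `r = AuditedRecord("R-1", "field_officer", {"count": 3})` and `r.update("analyst", count=4)`, `r.source()` returns `"field_officer"` and `r.history()` lists the creation and the update in order. In this sketch the log is append-only only by convention; a production system would also have to protect the log itself from modification.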

All records in the database are governed by a state machine that enables and tracks the review of data by individuals, the approval or denial of that data, and any alterations, so that information moves appropriately along the chain of custody. Once a record is confirmed as accurate, it can be approved, and custody then passes to other key operators in the organization. If the same record is instead found to be inaccurate and denied, the state machine prevents further redistribution of that data.
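
The review workflow described above maps naturally onto a transition table. The following minimal sketch assumes four hypothetical states (`DRAFT`, `IN_REVIEW`, `APPROVED`, `DENIED`) and assumes that a revision sends a record back to draft for another review; the actual states and rules of the InQuisient platform are not documented here. Distribution is permitted only from the approved state, so a denied record cannot be passed along.

```python
from enum import Enum


class State(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    APPROVED = "approved"
    DENIED = "denied"


# Allowed moves: (current state, action) -> next state.
# Any pair not listed here is rejected.
TRANSITIONS = {
    (State.DRAFT, "submit"): State.IN_REVIEW,
    (State.IN_REVIEW, "approve"): State.APPROVED,
    (State.IN_REVIEW, "deny"): State.DENIED,
    (State.DENIED, "revise"): State.DRAFT,    # assumption: denied data may be corrected
    (State.APPROVED, "revise"): State.DRAFT,  # assumption: edits require re-review
}


class InvalidTransition(Exception):
    pass


class Record:
    def __init__(self, record_id):
        self.record_id = record_id
        self.state = State.DRAFT
        self.history = [State.DRAFT]  # every state the record has passed through

    def apply(self, action):
        key = (self.state, action)
        if key not in TRANSITIONS:
            raise InvalidTransition(
                f"cannot {action!r} record {self.record_id} in state {self.state.value}"
            )
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state

    def distribute(self):
        # Only approved records may move further along the chain of custody.
        if self.state is not State.APPROVED:
            raise InvalidTransition(
                f"record {self.record_id} is {self.state.value}; "
                "only approved records can be distributed"
            )
        return f"record {self.record_id} released"
```

Keeping the rules in one table makes every allowed move explicit: any action not listed raises `InvalidTransition` rather than being silently accepted.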

But who decides which individuals along the chain of custody have the authority to approve or deny data? The organization controls several role-based security layers, placed along the platform’s state machine, that determine data accessibility across the organization. This role-based authorization is an essential aspect of the InQuisient platform: it allows decision makers to delegate roles and assign edit privileges to different individuals across the user population. In this way, the platform directly supports the proper flow of knowledge along a trusted chain of custody, ensuring data quality and building users’ confidence in their data and their data-driven decisions.
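
Role-based authorization can be layered onto such a workflow by checking each requested action against the roles a user holds. In the sketch below, the role names, the actions they grant, and the rule that only an `admin` may delegate roles are all hypothetical choices for illustration, not a description of how the InQuisient platform is configured.

```python
from dataclasses import dataclass, field

# Hypothetical grants: which workflow actions each role may perform.
ROLE_GRANTS = {
    "contributor": {"submit", "revise"},
    "reviewer": {"approve", "deny"},
    "admin": {"delegate"},
}


class NotAuthorized(Exception):
    pass


@dataclass
class User:
    name: str
    roles: set = field(default_factory=set)


def allowed_actions(user):
    """Union of the actions granted by every role the user holds."""
    actions = set()
    for role in user.roles:
        actions |= ROLE_GRANTS.get(role, set())
    return actions


def authorize(user, action):
    """Raise NotAuthorized unless one of the user's roles grants the action."""
    if action not in allowed_actions(user):
        raise NotAuthorized(f"{user.name} may not {action!r}")


def delegate(granter, grantee, role):
    """Give `grantee` a role; the granter must be allowed the 'delegate' action."""
    authorize(granter, "delegate")
    if role not in ROLE_GRANTS:
        raise ValueError(f"unknown role {role!r}")
    grantee.roles.add(role)
```

For example, a user holding only `contributor` can submit but not approve; once an `admin` calls `delegate(admin_user, that_user, "reviewer")`, `approve` becomes available. Keeping grants in a single table means a policy change touches data rather than code.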

“Today’s leaders must have access to the best information available in order to make the best decisions possible. It is essential that they are able to maintain a high level of confidence in the data that provides the foundation for those decisions. Managing and maintaining data quality ensures confidence in the data from the front office to the field office, making it a fundamental measure for organizational success.”

GREATER IMMEDIACY

An effective web-based platform delivers distinct value by enabling immediate access to the real-time data and information needed to make critical decisions. The platform provides a browser-based interface that can be accessed 24/7, from anywhere, on any Internet-connected device. This interface enables immediate interaction with the collected data for analysis, visualization, and reporting. And because the data is kept current, the risk of executing decisions based on outdated information is significantly reduced, allowing decision makers to consistently make better decisions with better data.

High-quality data translates into the ability to make critical decisions based on detailed information, with confidence that all relevant data is consolidated, accurate, organized, and available at a moment’s notice. Because consistent mission success relies on access to real-time, accurate information, it is evident that the data confidence provided by InQuisient meets the needs of such organizational initiatives. The platform provides the functionality and tools required to attain that level of success.

An innovative data management platform keeps data up-to-date across the organization. Access to trusted data through a browser-based platform ensures that data-driven decisions are based on today’s truth. Because individual contributors can capture details at any time and from any location, the data stays current. Data contributors and decision makers can be confident that the information delivered across the organization is secure and that everyone within the network has immediate access to the most recent information.

SUMMARY

Today’s leaders rely heavily on data-driven decision making to solve complex problems that have a direct impact on their businesses and their missions. Stakeholders’ concerns about running their organizations successfully extend so far beyond data quality that it is often overlooked. In reality, data quality is one of the most fundamental ingredients of consistent mission success and must be maintained at all times.

About the Author: Mr. Randy DeWoolfson is the Chairman and Chief Innovation Officer at InQuisient. InQuisient’s enterprise data management platform creates order from chaos, enabling the timely, multilateral flow of knowledge across all operational levels. The browser-based platform provides anywhere access to robust, user-driven data management tools that solve specific and emerging challenges. The InQuisient platform provides a clear chain of custody along with the highest standards of data security and integrity. With more than a decade of development and successful implementations across U.S. Federal Government, DoD and the Intelligence Community, InQuisient is trusted by the nation’s most discerning organizations. For more information, visit http://www.inquisient.com/tools/
