One of our ever-insightful NCDD members, Tiago Peixoto, shared a summary of some important civic participation research showing that the "mixed results" of participation efforts become more meaningful when we distinguish between "tactical" and "strategic" interventions. We've shared Tiago's piece from his DemocracySpot blog below, and you can find the original here.

Social Accountability: What Does the Evidence Really Say?

So what does the evidence about citizen engagement say? Particularly in the development world, it is common to say that the evidence is "mixed". It is the type of answer that, even if correct in extremely general terms, does not really help those who are actually designing and implementing citizen engagement reforms.

This is why a new (GPSA-funded) work by Jonathan Fox, “Social Accountability: What does the Evidence Really Say” is a welcome contribution for those working with open government in general and citizen engagement in particular. Rather than a paper, this work is intended as a presentation that summarizes (and disentangles) some of the issues related to citizen engagement.

Before briefly discussing it, some definitional clarification. I am equating “social accountability” with the idea of citizen engagement given Jonathan’s very definition of social accountability:

Social accountability strategies try to improve public sector performance by bolstering both citizen engagement and government responsiveness.

In short, according to this definition, social accountability is broadly understood as "citizen participation" followed by government responsiveness, which encompasses practices as distinct as Freedom of Information law campaigns, participatory budgeting, and referenda.

But what is new about Jonathan’s work? A lot, but here are three points that I find particularly important, based on a very personal interpretation of his work.

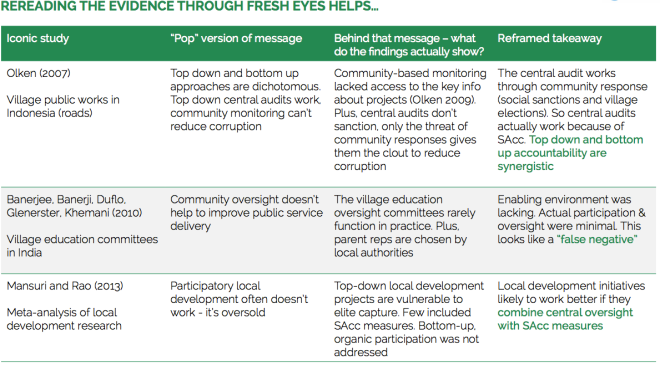

First, Jonathan makes an important distinction between what he defines as "tactical" and "strategic" social accountability interventions. Tactical interventions, which could also be called "naïve" interventions, include, for instance, those that are limited in scope (relying on a single tool) and those that assume that mere access to information (or data) is enough. Strategic approaches, by contrast, deploy multiple tools and coordinate society-side efforts with governmental reforms that promote responsiveness.

This distinction is important because, when examining the impact evaluation evidence, one finds that while the evidence is indeed mixed for tactical approaches, it is much more promising for strategic approaches. A blunt lesson to take from this is that when looking at the evidence, one should avoid comparing lousy initiatives with more substantive reform processes. Otherwise, it is no wonder that “the evidence is mixed.”

Second, this work offers an important re-reading of some of the literature that has found "mixed effects", reminding us that when it comes to citizen engagement, the devil is in the details. For instance, in a number of studies that seem to say that participation does not work, a closer look shows that it is no surprise they do not work. Many times the problem is precisely that there is no participation whatsoever: "false negatives," as Jonathan eloquently puts it.

Third, Jonathan highlights the need to bring together the “demand” (society) and “supply” (government) sides of governance. Many accountability interventions seem to assume that it is enough to work on one side or the other, and that an invisible hand will bring them together. Unfortunately, when it comes to social accountability it seems that some degree of “interventionism” is necessary in order to bridge that gap.

Of course, there is much more in Jonathan’s work than that, and it is a must read for those interested in the subject. You can download it here [PDF].

You can find the original version of this piece on Tiago's DemocracySpot blog at http://democracyspot.net/2014/05/13/social-accountability-what-does-the-evidence-really-say.